This post walks you through how to back up a PostgreSQL database to an AWS S3 bucket.

We need to install a few tools before our on-prem Postgres server can communicate with AWS.

Install pip

- Use the `curl` command to download the installation script. The `-O` (uppercase “O”) parameter stores the downloaded file in the current folder under the same name it has on the remote host:

curl -O https://bootstrap.pypa.io/get-pip.py

- Run the script with Python to download and install the latest version of `pip` and other required support packages:

python36 get-pip.py --user

When you include the `--user` switch, the script installs `pip` to the path `~/.local/bin`.

- Ensure the folder that contains `pip` is part of your `PATH` variable. First, find your shell's profile script in your home folder:

ls -a ~

- Add an export command at the end of your profile script that’s similar to the following example, then reload the profile:

export PATH=~/.local/bin:$PATH

source ~/.bash_profile

- Now you can test to verify that `pip` is installed correctly:

pip3 --version
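If the profile script is sourced more than once, a plain export keeps prepending the same folder to `PATH`. The following is a minimal sketch of a guard against that, assuming `pip` was installed with `--user` into `~/.local/bin`; the function name `add_to_path` is made up for this example.

```shell
# Hypothetical helper: prepend a directory to PATH only if it is not there yet.
add_to_path() {
  case ":$PATH:" in
    *":$1:"*) ;;               # already present, nothing to do
    *) PATH="$1:$PATH" ;;      # otherwise put it at the front
  esac
  export PATH
}

# Assumption: this is where "get-pip.py --user" placed the pip executables.
add_to_path "$HOME/.local/bin"
```

Placed at the end of the profile script, this leaves `PATH` unchanged when the folder is already listed.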

Install the AWS CLI with pip

- Use `pip` to install the AWS CLI:

pip3 install awscli --upgrade --user

- Verify that the AWS CLI installed correctly:

aws --version

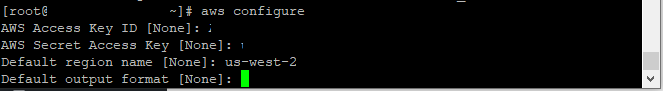
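If `aws --version` reports "command not found", the usual cause is that `~/.local/bin` is missing from `PATH`. A small check like the one below can make that failure explicit in a script. This is only a sketch, and `require_cmd` is a hypothetical helper name.

```shell
# Hypothetical helper: succeed only if the named command can be found on PATH.
require_cmd() {
  if ! command -v "$1" >/dev/null 2>&1; then
    echo "missing command: $1 (is ~/.local/bin on your PATH?)" >&2
    return 1
  fi
}
```

For example, `require_cmd aws && aws --version` prints the version only when the CLI is reachable.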

Now that we have the AWS CLI installed, we can configure our new client. You will need an AWS Access Key ID, an AWS Secret Access Key, a default region name, and a default output format. You can get the access keys from the IAM section of the AWS console.

aws configure
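`aws configure` prompts for the four values in turn. If you would rather not answer prompts (for example on a server you script against), the AWS CLI also reads the same settings from environment variables. The sketch below uses placeholder values: the key pair is the example pair from the AWS documentation, and the region and output format are assumptions you should replace with your own.

```shell
# Placeholder credentials (AWS documentation example values); substitute your own.
export AWS_ACCESS_KEY_ID="AKIAIOSFODNN7EXAMPLE"
export AWS_SECRET_ACCESS_KEY="wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY"

# Assumed region and output format for this walkthrough.
export AWS_DEFAULT_REGION="us-west-2"
export AWS_DEFAULT_OUTPUT="json"
```

Environment variables take precedence over the files written by `aws configure`, so unset them if you later want the stored profile to apply.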

To list your S3 buckets, use the following:

aws s3 ls

Now that the AWS CLI is configured and we can list our S3 buckets, let’s make a backup:

PGPASSWORD="password" ./pg_dump --no-owner -h localhost -U username databasename > ~/databasename.sql
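The command above always writes to the same file, so each new dump overwrites the previous one. One way around that is to put a timestamp in the file name. The following is a sketch under stated assumptions: `backup_db` is a hypothetical function name, `pg_dump` is assumed to be on `PATH`, and the password is assumed to come from `PGPASSWORD` or `~/.pgpass`.

```shell
# Hypothetical helper: dump a database to a timestamped .sql file.
# Arguments: role name, database name, optional target directory (default: home).
backup_db() {
  local db_user="$1"
  local db_name="$2"
  local backup_dir="${3:-$HOME}"
  local stamp outfile

  stamp="$(date +%Y%m%d-%H%M%S)"
  outfile="$backup_dir/${db_name}-${stamp}.sql"

  # Same pg_dump options as in the post; the password is read from the environment.
  if pg_dump --no-owner -h localhost -U "$db_user" "$db_name" > "$outfile"; then
    echo "$outfile"          # print the path so callers can pass it on
  else
    rm -f "$outfile"         # do not leave a partial dump behind
    return 1
  fi
}
```

Calling `backup_db username databasename` prints the path of the file it wrote, which can then be handed to the upload step.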

To view the backup file, change to your home directory (where the dump was written) and list its contents:

cd ~

dir
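Listing the directory shows that the file exists, but a failed dump can still leave an empty file behind. A quick size check before uploading catches that case. This is a minimal sketch, and `check_backup` is a hypothetical name.

```shell
# Hypothetical helper: fail unless the backup file exists and is non-empty.
check_backup() {
  local file="$1"
  if [ ! -s "$file" ]; then
    echo "backup missing or empty: $file" >&2
    return 1
  fi
  ls -lh "$file"             # show size and timestamp for a visual check
}
```

For example, `check_backup ~/databasename.sql` prints the file details or returns a non-zero status.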

Now that we have a backup, let’s create an AWS S3 bucket to store it in. Bucket names are globally unique, so replace postgres-backups with a name of your own:

aws s3api create-bucket --bucket postgres-backups --region us-west-2 --create-bucket-configuration LocationConstraint=us-west-2
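Running `create-bucket` a second time against a bucket you already own is unnecessary, and `us-east-1` is a special case in which the `LocationConstraint` must be omitted. The sketch below wraps both points in one function. `ensure_bucket` is a hypothetical name. The `head-bucket` check also fails when the bucket belongs to another account, in which case the create call that follows will report the error.

```shell
# Hypothetical helper: create the bucket only if head-bucket cannot reach it.
# Arguments: bucket name, region.
ensure_bucket() {
  local bucket="$1"
  local region="$2"

  if aws s3api head-bucket --bucket "$bucket" >/dev/null 2>&1; then
    echo "bucket exists: $bucket"
    return 0
  fi

  if [ "$region" = "us-east-1" ]; then
    # us-east-1 does not accept a LocationConstraint.
    aws s3api create-bucket --bucket "$bucket" --region "$region"
  else
    aws s3api create-bucket --bucket "$bucket" --region "$region" \
      --create-bucket-configuration "LocationConstraint=$region"
  fi
}
```

With the values from this post, the call would be `ensure_bucket postgres-backups us-west-2`.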

Back in the AWS console, you can see the new bucket.

Once the new bucket has been created, let’s push the backup we took earlier to this bucket.

aws s3 cp databasename.sql s3://postgres-backups/
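To confirm the upload from the command line rather than the console, you can list the object right after copying it. The following is a sketch, with `upload_backup` as a hypothetical function name; it uses only `aws s3 cp` and `aws s3 ls`.

```shell
# Hypothetical helper: copy a backup file to a bucket, then list the uploaded object.
# Arguments: local file path, bucket name.
upload_backup() {
  local file="$1"
  local bucket="$2"
  local key

  key="$(basename "$file")"                        # keep the local file name as the object key
  aws s3 cp "$file" "s3://$bucket/$key" || return 1
  aws s3 ls "s3://$bucket/$key"                    # prints size and timestamp if the object exists
}
```

For the file and bucket used above, that would be `upload_backup ~/databasename.sql postgres-backups`.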